
Then to train the final model, we can increase the amount of noise in the sum, while proportionally scaling up the number of clients per round (assuming the dataset is large enough to support that many clients per round). Our strategy will be to first determine how much noise the model can tolerate with a relatively small number of clients per round with acceptable loss to model utility. 2021, Differentially Private Learning with Adaptive Clipping, so no clipping norm needs to be explicitly set.Īdding noise will in general degrade the utility of the model, but we can control the amount of noise in the average update at each round with two knobs: the standard deviation of the Gaussian noise added to the sum, and the number of clients in the average. Second, the server must add enough noise to the sum of user updates before averaging to obscure the worst-case client influence.įor clipping, we use the adaptive clipping method of Andrew et al. First, the clients' model updates must be clipped before transmission to the server, bounding the maximum influence of any one client. To get user-level DP guarantees, we must change the basic Federated Averaging algorithm in two ways. Metrics=)ĭetermine the noise sensitivity of the model. Train_data, test_data = get_emnist_dataset() Return dataset.map(element_fn).batch(128, drop_remainder=False)Įmnist_train = emnist_train.preprocess(preprocess_train_dataset)Įmnist_test.create_tf_dataset_from_all_clients()) # Use buffer_size same as the maximum client dataset size, X=tf.expand_dims(element, -1), y=element) def get_emnist_dataset():Įmnist_train, emnist_test = .load_data( hello_world():ĭownload and preprocess the federated EMNIST dataset. Please refer to the Installation guide for instructions. import collectionsĮxample to make sure the TFF environment is correctly setup. 
We will use tensorflow_federated, the open-source framework for machine learning and other computations on decentralized data, as well as dp_accounting, an open-source library for analyzing differentially private algorithms. Some imports we will need for the tutorial. pip install -quiet -upgrade dp-accounting pip install -quiet -upgrade tensorflow-federated Before we beginįirst, let us make sure the notebook is connected to a backend that has the relevant components compiled. If we used more training rounds, we could certainly have a somewhat higher-accuracy private model, but not as high as a model trained without DP.

For expediency in this tutorial, we will train for just 100 rounds, sacrificing some quality in order to demonstrate how to train with high privacy. There is an inherent trade-off between utility and privacy, and it may be difficult to train a model with high privacy that performs as well as a state-of-the-art non-private model. We will train a model on the federated EMNIST dataset.

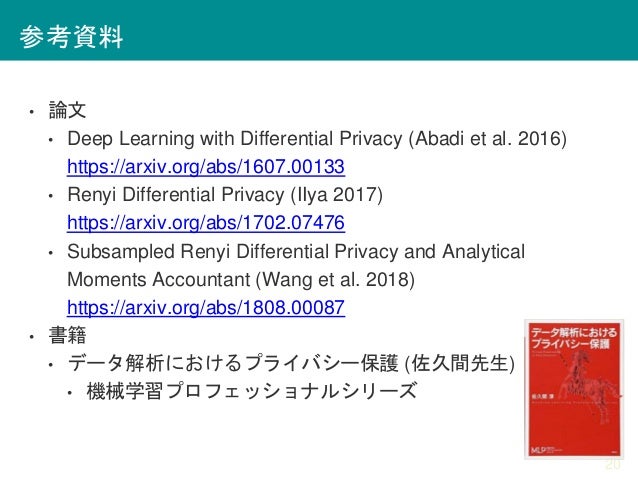

Training a model with user-level DP guarantees that the model is unlikely to learn anything significant about the data of any individual, but can still (hopefully!) learn patterns that exist in the data of many clients. We will use the DP-SGD algorithm of Abadi et al., "Deep Learning with Differential Privacy" modified for user-level DP in a federated context in McMahan et al., "Learning Differentially Private Recurrent Language Models".ĭifferential Privacy (DP) is a widely used method for bounding and quantifying the privacy leakage of sensitive data when performing learning tasks. This tutorial will demonstrate the recommended best practice for training models with user-level Differential Privacy using Tensorflow Federated.
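As a rough illustration of the user-level DP mechanism this tutorial relies on (clip each client update, add Gaussian noise to the sum, then average), here is a minimal pure-Python sketch. It is not the TFF implementation: the function names `clip_update`, `dp_average`, and `average_noise_stddev` are hypothetical, and it assumes a fixed clipping norm for simplicity, whereas the tutorial uses adaptive clipping.

```python
import math
import random


def clip_update(update, clip_norm):
    """Scale `update` down so that its L2 norm is at most `clip_norm`."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def dp_average(updates, clip_norm, noise_multiplier, rng):
    """Clip every client update, add Gaussian noise to the sum, then average.

    Assumption: fixed `clip_norm`. The noise standard deviation on the sum is
    noise_multiplier * clip_norm, i.e. it is calibrated to the largest
    contribution a single clipped client can make.
    """
    clipped = [clip_update(u, clip_norm) for u in updates]
    stddev = noise_multiplier * clip_norm
    dim = len(clipped[0])
    noisy_sum = [
        sum(u[i] for u in clipped) + rng.gauss(0.0, stddev) for i in range(dim)
    ]
    return [s / len(updates) for s in noisy_sum]


def average_noise_stddev(clip_norm, noise_multiplier, clients_per_round):
    """Per-coordinate standard deviation of the noise in the averaged update."""
    return noise_multiplier * clip_norm / clients_per_round


rng = random.Random(0)
client_updates = [[3.0, 4.0], [0.0, 0.5], [-1.0, 2.0]]
print(dp_average(client_updates, clip_norm=1.0, noise_multiplier=0.5, rng=rng))

# Doubling both the noise multiplier and the number of clients per round
# leaves the noise in the average unchanged.
print(average_noise_stddev(1.0, 0.5, 50), average_noise_stddev(1.0, 1.0, 100))
```

The last two lines show the two knobs at work: because the noise in the average scales as the noise on the sum divided by the number of clients, raising both in proportion keeps the noise seen by the model the same while more noise is added to the sum.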
